    Algorithm arrayMax(A, n)
        Input: array A of n integers
        Output: maximum element of A
        currentMax <- A[0]
        for i <- 1 to n−1 do
            if A[i] > currentMax then
                currentMax <- A[i]
        return currentMax
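
The pseudocode above translates almost line for line into Python. The following is a minimal sketch, not part of the original slides: the name `array_max` is hypothetical, the length `n` is taken from `len(a)` rather than passed in, and the list is assumed to be non-empty.

```python
def array_max(a):
    """Return the maximum element of a non-empty list of integers."""
    current_max = a[0]              # currentMax <- A[0]
    for i in range(1, len(a)):      # for i <- 1 to n-1 do
        if a[i] > current_max:      # if A[i] > currentMax then
            current_max = a[i]      # currentMax <- A[i]
    return current_max


print(array_max([3, 1, 4, 1, 5, 9, 2, 6]))  # 9
```

In everyday Python the built-in `max(a)` does the same job; the explicit loop is kept here only so that each line can be matched against the operation counts.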

| Algorithm arrayMax(A, n) | Number of operations |
|---|---|
| currentMax <- A[0] | 2 |
| for i <- 1 to n−1 do | 2 + n |
| if A[i] > currentMax then | 2(n − 1) |
| currentMax <- A[i] | 2(n − 1) |
| { increment counter i } | 2(n − 1) |
| return currentMax | 1 |
| Total | 7n − 1 |
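
The total can be checked by summing the per-line counts. Note that charging 2(n − 1) operations to `currentMax <- A[i]` assumes the assignment runs on every iteration, which is the worst case (for example, a strictly increasing array):

$$
2 + (2 + n) + 2(n-1) + 2(n-1) + 2(n-1) + 1 = 5 + n + 6(n-1) = 7n - 1
$$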

    Algorithm prefixAverages1(X, n)             #operations
        Input: array X of n integers
        Output: array A of prefix averages of X
        A <- new array of n integers            n
        for i <- 0 to n−1 do                    n
            s <- X[0]                           n
            for j <- 1 to i do                  1 + 2 + … + (n−1)
                s <- s + X[j]                   1 + 2 + … + (n−1)
            A[i] <- s/(i+1)                     n
        return A                                1

Since 1 + 2 + … + (n−1) = n(n−1)/2, algorithm prefixAverages1 runs in O(n²) time.

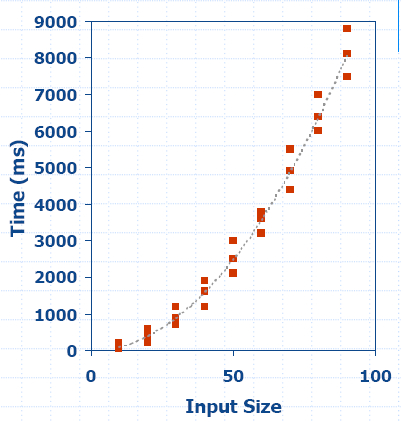
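
As an illustration of where the 1 + 2 + … + (n−1) term comes from, here is a rough Python sketch of the nested-loop algorithm with a counter on the inner addition. The function name and the counter `inner_additions` are hypothetical additions for this example, and the averages are stored as floats rather than integers.

```python
def prefix_averages1(xs):
    """Prefix averages by recomputing every prefix sum from scratch."""
    n = len(xs)
    averages = [0.0] * n
    inner_additions = 0  # counts executions of s <- s + X[j]
    for i in range(n):
        s = xs[0]
        for j in range(1, i + 1):
            s += xs[j]
            inner_additions += 1
        averages[i] = s / (i + 1)
    return averages, inner_additions


averages, additions = prefix_averages1([1, 2, 3, 4])
print(averages)   # [1.0, 1.5, 2.0, 2.5]
print(additions)  # 6, i.e. 0 + 1 + 2 + 3 = n(n-1)/2 for n = 4
```

Doubling the input length roughly quadruples the counter, which is the quadratic growth in practice.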

The following algorithm computes prefix averages in linear time by keeping a running sum.

    Algorithm prefixAverages2(X, n)             #operations
        Input: array X of n integers
        Output: array A of prefix averages of X
        A <- new array of n integers            n
        s <- 0                                  1
        for i <- 0 to n−1 do                    n
            s <- s + X[i]                       n
            A[i] <- s/(i + 1)                   n
        return A                                1

Algorithm prefixAverages2 runs in O(n) time.
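
The running-sum idea can be sketched in Python as follows. This is one possible rendering rather than the slides' own code: the name `prefix_averages2` is hypothetical, the result list is built with `append` instead of being preallocated, and the averages are floats.

```python
def prefix_averages2(xs):
    """Prefix averages in a single pass, reusing the running sum."""
    averages = []
    running_sum = 0
    for i, x in enumerate(xs):
        running_sum += x                         # s <- s + X[i]
        averages.append(running_sum / (i + 1))   # A[i] <- s/(i + 1)
    return averages


print(prefix_averages2([1, 2, 3, 4]))  # [1.0, 1.5, 2.0, 2.5]
```

Each element is touched exactly once, so the loop body runs n times, matching the per-line counts of n in the table above.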