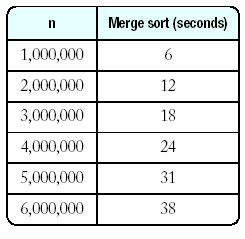

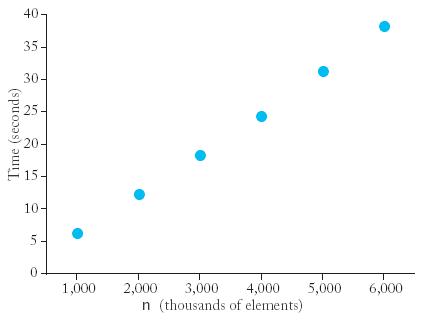

Time now

    Time before;
    selection_sort(v);
    Time after;
    cout << "Elapsed time = " << after.seconds_from(before)
         << " seconds\n";
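The timing pattern above relies on a `Time` class that is not shown here. As a rough sketch, the same before/after measurement can be written with the standard `<chrono>` library. The helper name `timed_selection_sort` and the selection sort body are assumptions made for illustration, not code from the slides.

```cpp
#include <algorithm>
#include <cassert>
#include <chrono>
#include <cstddef>
#include <utility>
#include <vector>

// Plain selection sort, standing in for the selection_sort(v) call above.
void selection_sort(std::vector<int>& v)
{
    for (std::size_t next = 0; next + 1 < v.size(); next++)
    {
        // Find the smallest element in the unsorted tail v[next..].
        std::size_t min_pos = next;
        for (std::size_t i = next + 1; i < v.size(); i++)
            if (v[i] < v[min_pos]) min_pos = i;
        std::swap(v[next], v[min_pos]);
    }
}

// Hypothetical helper: sorts v and returns the elapsed wall-clock seconds.
double timed_selection_sort(std::vector<int>& v)
{
    auto before = std::chrono::steady_clock::now();  // plays the role of "Time before;"
    selection_sort(v);
    auto after = std::chrono::steady_clock::now();   // plays the role of "Time after;"

    // Sanity check, done after the second reading so it is not timed.
    assert(std::is_sorted(v.begin(), v.end()));

    return std::chrono::duration<double>(after - before).count();
}
```

Calling `timed_selection_sort(v)` on vectors of increasing size gives the raw numbers for a running-time plot. `steady_clock` is used here because it never jumps backwards, which makes it a reasonable choice for measuring intervals.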


because

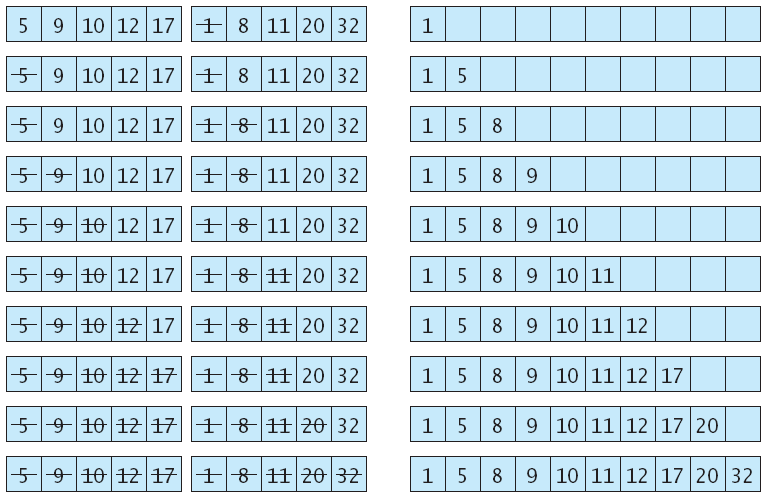

    void merge_sort(vector<int>& a, int from, int to)
    {
        if (from == to) return;
        int mid = (from + to) / 2;
        /* sort the first and second half */
        merge_sort(a, from, mid);
        merge_sort(a, mid + 1, to);
        merge(a, from, mid, to);
    }

Merge Sort (mergsort.cpp)
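The `merge_sort` function calls a `merge` helper that does not appear in this excerpt. The following is one possible sketch of it, assuming `merge(a, from, mid, to)` combines the two already sorted ranges `a[from..mid]` and `a[mid+1..to]` (both bounds inclusive) through a temporary vector. The body of `merge` is an assumption, and the guard in `merge_sort` is loosened to `from >= to` so that an empty range is also handled.

```cpp
#include <cassert>
#include <vector>

// Assumed helper: merges the sorted ranges a[from..mid] and a[mid+1..to].
void merge(std::vector<int>& a, int from, int mid, int to)
{
    assert(from <= mid && mid <= to);

    std::vector<int> b;            // temporary storage for the merged range
    b.reserve(to - from + 1);

    int i1 = from;                 // next unread element of the first half
    int i2 = mid + 1;              // next unread element of the second half

    // Repeatedly move the smaller front element into b.
    while (i1 <= mid && i2 <= to)
    {
        if (a[i1] <= a[i2]) b.push_back(a[i1++]);
        else                b.push_back(a[i2++]);
    }

    // At most one of these loops runs: copy whatever is left over.
    while (i1 <= mid) b.push_back(a[i1++]);
    while (i2 <= to)  b.push_back(a[i2++]);

    // Copy the merged result back into its place in a.
    for (int j = 0; j < static_cast<int>(b.size()); j++)
        a[from + j] = b[j];
}

void merge_sort(std::vector<int>& a, int from, int to)
{
    if (from >= to) return;        // zero or one element: already sorted
    int mid = (from + to) / 2;
    merge_sort(a, from, mid);      // sort the first half
    merge_sort(a, mid + 1, to);    // sort the second half
    merge(a, from, mid, to);
}
```

To sort a whole vector, call `merge_sort(a, 0, a.size() - 1)`. Taking from the first half on ties (`<=`) keeps equal elements in their original order, so this version is stable.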


    int binary_search(vector<Employee>& v, int from, int to, string n)
    {
        if (from > to) return -1;
        int mid = (from + to) / 2;
        if (v[mid].get_name() == n) return mid;
        else if (v[mid].get_name() < n)
            return binary_search(v, mid + 1, to, n);
        else
            return binary_search(v, from, mid - 1, n);
    }
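The binary search above only works when the vector is already sorted by name. Here is a self-contained sketch of the same search, using a minimal stand-in `Employee` class. The class layout (a name and a salary) is an assumption, since only `get_name()` appears in the original code, and the parameters are passed by `const` reference to avoid copies.

```cpp
#include <string>
#include <vector>

// Minimal stand-in for the Employee class; only get_name() is needed here.
class Employee
{
public:
    Employee(const std::string& name, double salary)
        : name_(name), salary_(salary) {}
    std::string get_name() const { return name_; }
    double get_salary() const { return salary_; }
private:
    std::string name_;
    double salary_;
};

// Searches v[from..to] (inclusive), which must be sorted by name.
// Returns the index of the employee named n, or -1 if there is none.
int binary_search(const std::vector<Employee>& v, int from, int to,
                  const std::string& n)
{
    if (from > to) return -1;              // empty range: not found
    int mid = (from + to) / 2;
    if (v[mid].get_name() == n) return mid;
    else if (v[mid].get_name() < n)
        return binary_search(v, mid + 1, to, n);   // look in the upper half
    else
        return binary_search(v, from, mid - 1, n); // look in the lower half
}
```

With a vector holding employees named Cindy, Harry, Romeo and Tom in that order, `binary_search(staff, 0, 3, "Tom")` inspects Harry, then Romeo, then Tom, and returns index 3. A name that is not present shrinks the range until `from > to` and yields -1.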